From Labs to People: A Paradigm Shift in AI Research — Insights from a Systematic Review

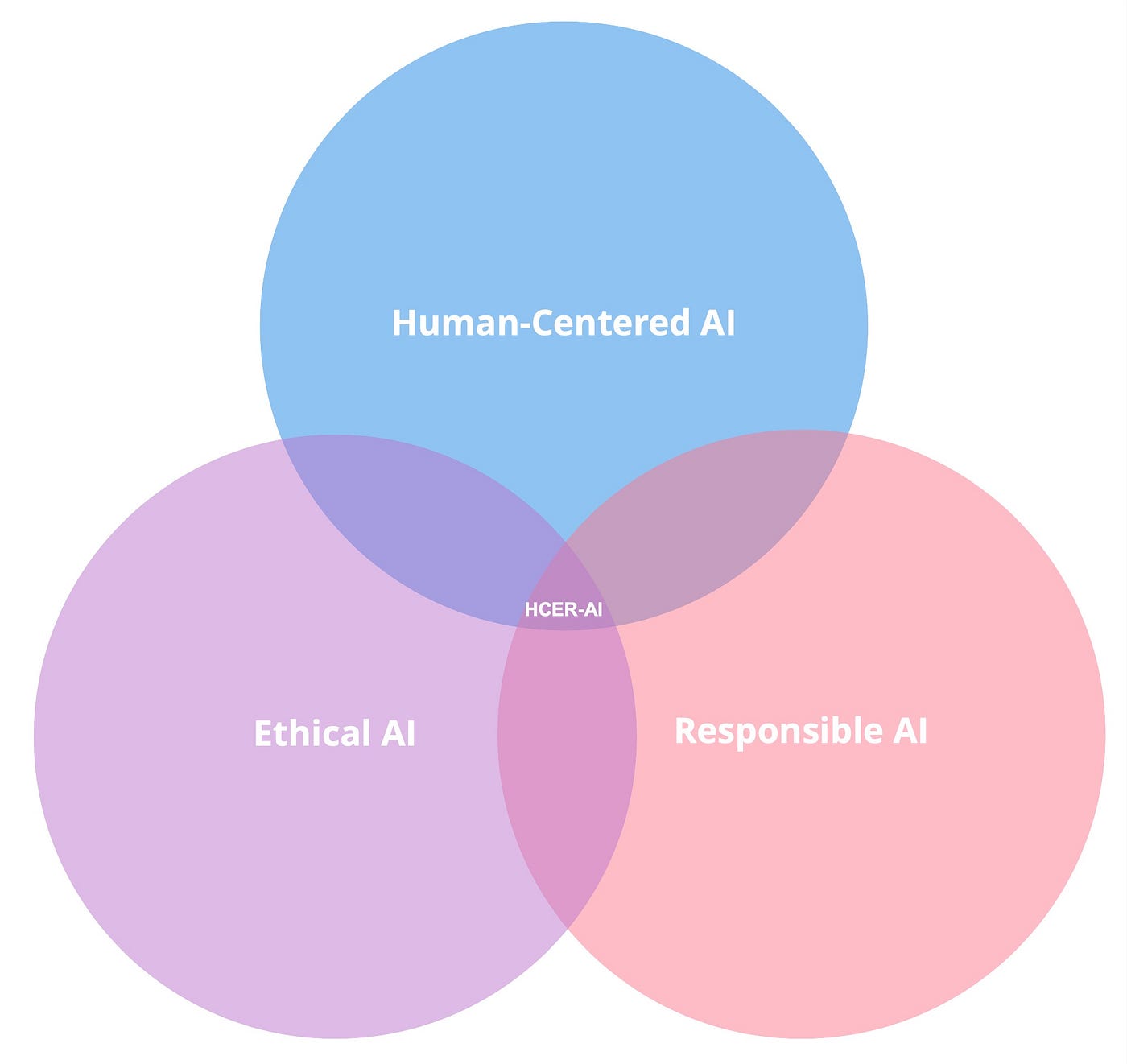

Last week, I concluded a systematic literature review with three great colleagues (Marios Constantinides, Daniele Quercia, and Michael Muller) on Human-Centered, Ethical, and Responsible AI (HCER-AI). The review serves as a compass for navigating this research terrain.

HCER-AI brings together several research interests around one critical task: designing, building, and deploying AI systems that align with our expectations. It focuses on creating AI systems that people can control and understand, so that they augment our capabilities and enrich our societies.

What is a systematic literature review? A systematic literature review paints a comprehensive portrait of a research area. It uncovers what has been accomplished and what areas are yet unexplored, guiding researchers and policymakers toward productive avenues of exploration.

In our analysis, we studied 164 HCER-AI research papers published in four premier, highly competitive research conferences. These papers undergo rigorous scrutiny by at least three independent researchers who vet their quality and relevance. As a result, the compilation of these studies offers an exhaustive overview of current AI research trends, specifically those concerning ethical and responsible AI development. Note that both academic and industry researchers, including those from tech giants such as Google and Microsoft, present their latest findings at these conferences.

Exponential growth and interest

We observed an impressive, almost exponential growth in this field of research — from a mere 4 papers in all of 2018 to a remarkable 45 papers in just the first five months of 2023!

So, what are researchers doing?

Research efforts are primarily geared toward understanding AI’s general impacts and how best to regulate and govern it. This trend isn’t surprising, given the academic propensity to challenge the status quo and guide technological advancements. Numerous papers offer blueprints for conducting ethical AI research. Others revisit familiar concerns: for instance, the worry that industry influences academia and shapes its research, though not new, has been reinforced by studies examining faculty funding.

What seems to be missing?

Despite public apprehension about AI safety, research areas addressing this concern, such as privacy, security, and human welfare, do not seem to top researchers’ priority lists.

What’s needed?

Expanding the research portfolio beyond the popular or currently trending topics is key. Listening to the voice of AI users and those affected by AI — job applicants, patients receiving AI-based diagnoses, and individuals denied loans due to AI-derived decisions — is essential.

AI research needs to transition from a research-centric mindset to a people-centric mindset. I find the notion of transforming our labs and crowdsourcing websites into open-door research studios, akin to science museums, a nice way to start! In such studios, adults and children alike could interact with emerging technologies and concepts, providing invaluable feedback during the early design stages.

Endnote: I fully recognize the value of conducting science for science’s sake. However, when people are directly impacted by research outcomes, their voices should be heard in the discourse!